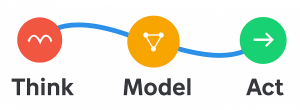

I’ve been experimenting with Microsoft’s new Agent Framework (MAF) - but with a twist. Instead of using cloud models or online APIs, I set out to see whether MAF could operate completely offline using local components only.

The setup:

- Ollama (with Llama3.1:8b) locally on localhost:11434
- docling MCP server for PDF-to-Markdown conversion locally on localhost:8000
- MAF Agent orchestrating the workflow
- Amazon EC2 (g6.2xlarge) - Ubuntu (Deep Learning - 20.04)

Ollama & Llama3.1:8b (localhost:11434) ---- MAF Agent ---- docling MCP (localhost:8000)

The agent runs fully offline, successfully using Ollama as its LLM and calling the docling MCP server via an MCPStreamableHTTPTool - all within a self-contained Ubuntu EC2 instance.

While I’m still troubleshooting docling’s PDF parsing logic (it’s not quite clear to me where the results of the conversion end up 😀), all communication and tool invocation works great - confirming that MAF can apparently operate without cloud dependencies.

This small experiment shows how local-first AI Agent architectures are not only possible but practical - blending Microsoft’s more elegant agent orchestration (it combines the best of Semantic Kernel and AutoGen) with self-hosted models and tools.

After building PoCs and demos with LangFlow, Copilot Studio, and now MAF, it’s clear to me that the agent ecosystem has matured - what felt experimental a year ago is now starting to feel genuinely usable.

Code is here: https://lnkd.in/gqWcpta4 (feel free to offer candid observations).

NOTE: Thanks to Pamela Fox’s insights here (https://lnkd.in/gFwBz9tV) for making me question my initial approach.

UPDATE: After this weekend’s work, using the qwen3:8b model, the extract is working fine and I’m able to pull questions and requirements from an RFP and get draft answers and guidance. Next up: combining this with RAG to give relevant answers. I am close to having an automated draft RFP responder (all offline and using MAF).
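Before wiring up the agent, it can help to confirm that both local services from the setup above are actually listening. The following is a minimal preflight sketch using only the Python standard library, not the code from the linked repository. The host and port values come from the post; the helper names (`port_open`, `model_names`, `list_ollama_models`) are hypothetical, and the only external API assumed is Ollama's `GET /api/tags` endpoint, which lists locally pulled models.

```python
import json
import socket
import urllib.request

# Endpoints as described in the post; adjust if your setup differs.
OLLAMA_HOST, OLLAMA_PORT = "localhost", 11434
DOCLING_HOST, DOCLING_PORT = "localhost", 8000


def port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def model_names(tags_payload):
    """Extract model names from a parsed Ollama /api/tags response body."""
    return [m["name"] for m in tags_payload.get("models", [])]


def list_ollama_models(host=OLLAMA_HOST, port=OLLAMA_PORT, timeout=5.0):
    """Ask the local Ollama server which models it has pulled."""
    url = f"http://{host}:{port}/api/tags"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return model_names(json.load(resp))


if __name__ == "__main__":
    ollama_up = port_open(OLLAMA_HOST, OLLAMA_PORT)
    docling_up = port_open(DOCLING_HOST, DOCLING_PORT)
    print(f"Ollama reachable:      {ollama_up}")
    print(f"docling MCP reachable: {docling_up}")
    # Only query the model list when the server is actually listening.
    if ollama_up:
        print("Local models:", list_ollama_models())
```

If both checks pass and the expected model (for example `llama3.1:8b` or `qwen3:8b`) appears in the list, any remaining failures are more likely to sit in the agent or tool configuration than in the local services themselves.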

October 2025

Sunday Coffee & Code: Running the new Microsoft Agent Framework (MAF) Completely Offline - with Ollama + Docling via MCP

By Steve Harris
