This week focused on performance benchmarking, architectural consolidation, and refining the quality of the automated responses across the 5 agents (soon to be 6). Everything still runs inside Microsoft Agent Framework (MAF) with A2A and MCP.

- Tested the Qwen3:30b model on an AWS g6.2xlarge instance. It was simply too much for the server (GPU usage bouncing between 0%, 5% and 10% with a slammed CPU), but at least I know where the boundary is now: the 14b model sits nicely in the GPU, running at about 97%-98%.
- Sorted out the RAG database with a shared ChromaDB instance. Way better answers now.
- Moved to the OpenAI API (gpt-5.2) with exceptional results. A draft RFP response now takes only 10 minutes, requires approximately 185k tokens and costs roughly $1.10 per draft (fast and cheap).
- Added client context processing. This allows the system to ingest an organization’s strategic priorities and annual reports to align the response with their specific goals and approaches.
- Claude Code and I also added industry-specific personas (including healthcare, government, financial services and First Nations) to give the QA agent more sophisticated industry context, with overall and red/green light scoring.

Next on the roadmap: a new agent designed to take the structured feedback from our QA agent and automatically rework the original draft, getting to a more polished draft even more quickly. (Claude Code is working on it right now; in fact, it just committed the change.)
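For anyone wanting to sanity-check the "fast and cheap" claim, here is a rough back-of-envelope sketch built only on the three figures quoted above (185k tokens, $1.10 and 10 minutes per draft). The function names are made up for illustration, the "blended rate" simply lumps input and output tokens together, and the batch estimate assumes drafts scale linearly and run one after another, which may not hold in practice.

```python
# Figures quoted in the post; everything derived from them is illustrative.
TOKENS_PER_DRAFT = 185_000   # "approximately 185k tokens"
COST_PER_DRAFT_USD = 1.10    # "roughly $1.10 per draft"
MINUTES_PER_DRAFT = 10       # "only 10 minutes"


def blended_rate_per_million(tokens: int, cost_usd: float) -> float:
    """Implied price per million tokens, with input and output tokens mixed together."""
    return cost_usd / tokens * 1_000_000


def batch_estimate(drafts: int) -> dict:
    """Scale the per-draft figures linearly to a batch of drafts run sequentially."""
    return {
        "tokens": drafts * TOKENS_PER_DRAFT,
        "cost_usd": round(drafts * COST_PER_DRAFT_USD, 2),
        "minutes": drafts * MINUTES_PER_DRAFT,
    }


if __name__ == "__main__":
    rate = blended_rate_per_million(TOKENS_PER_DRAFT, COST_PER_DRAFT_USD)
    print(f"Implied blended rate: ${rate:.2f} per million tokens")
    print(batch_estimate(20))
```

On those numbers the implied blended rate works out to a little under $6 per million tokens, and 20 drafts would come to about $22 and a bit over three hours of wall-clock time.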


January 2026

Sunday Coffee & Code: It's alive!!! (& stable)

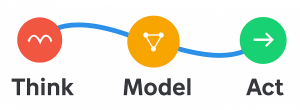


By Steve Harris
