Last weekend I was down a logprobs rabbit hole, trying to measure model confidence a bit more honestly. This weekend was about visualising it. I have been experimenting with a chart that plots the probability of the chosen token against the next-best alternative as a sentence unfolds (link in the comments, video snippet below; each vertical bar represents a token). It starts to show where the model (Meta llama3.1:8b with Ollama in this case) was steady, where it was torn between two options, and where it may have made a slightly shaky choice and then carried on very confidently.

The larger gaps were also interesting. They seem not just to show the separation between the chosen token and the runner-up, but also to hint at residual probability mass sitting outside those top two choices, which suggests the model was not simply deciding between two options but was more broadly uncertain.

Still early, but it feels useful (Anthropic Claude Code did a great job again). Last week was about measuring confidence more honestly; this week was about making that uncertainty visible. A good Sunday mix of coffee, code, and staring at token gaps for longer than is probably healthy 😀. (GitHub repo: https://github.com/steveh250/logprobs)
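To make the three quantities behind the chart concrete, here is a minimal sketch of the arithmetic for a single token: the probability of the chosen token, the probability of the best alternative, and whatever mass is left outside those two. This is not the code from the repo. The function name `token_confidence`, the input shape, and the sample values are all made up for illustration; real logprobs would come from whatever your model server returns.

```python
import math


def token_confidence(chosen_logprob, alt_logprobs):
    """Summarise one token position (hypothetical helper, not from the repo).

    chosen_logprob: natural-log probability of the token the model emitted.
    alt_logprobs:   natural-log probabilities of the other candidate tokens
                    (the chosen token itself must not be in this list).
    """
    p_chosen = math.exp(chosen_logprob)
    # Best alternative to the chosen token; 0.0 if no alternatives were given.
    p_alt = max((math.exp(lp) for lp in alt_logprobs), default=0.0)
    # Mass not accounted for by the top two; clamp tiny negative rounding errors.
    residual = max(0.0, 1.0 - p_chosen - p_alt)
    return {
        "p_chosen": p_chosen,
        "p_alt": p_alt,
        "gap": p_chosen - p_alt,
        "residual": residual,
    }


# Made-up values, chosen only to show the three situations described above.
steps = [
    ("steady", math.log(0.90), [math.log(0.05)]),
    ("torn", math.log(0.48), [math.log(0.45)]),
    ("broadly uncertain", math.log(0.30), [math.log(0.20), math.log(0.10)]),
]

for label, chosen_lp, alt_lps in steps:
    s = token_confidence(chosen_lp, alt_lps)
    print(f"{label:18s} chosen={s['p_chosen']:.2f} alt={s['p_alt']:.2f} "
          f"gap={s['gap']:.2f} residual={s['residual']:.2f}")
```

A small gap with a small residual reads as "torn between two options", while a large residual means the top two together still leave a lot of probability unexplained. Note that with sampling the chosen token is not always the most likely one, so the gap can be negative.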


GenerativeAI

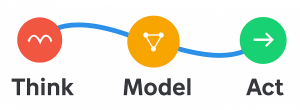

April 2026

Sunday Coffee & Code: from measuring model confidence to seeing it

Last weekend I was down a logprobs rabbit hole, trying to measure model confidence a bit more honestly. This weekend was about visualising it.

By Steve Harris
