One of the most important mindset shifts with Generative AI is that hallucinations are not a strange side effect that appears only when a model “goes bad.” They are tied to how these systems work. Large language models generate text by predicting the next token, not by checking whether a statement is true in the way a rules engine would. That is why they can sometimes produce something fluent, plausible, but wrong. The goal is not to pretend this problem will disappear. The goal is to learn how to work with it responsibly.
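To make the next-token idea concrete, here is a minimal Python sketch. The candidate tokens and their scores (logits) are invented for illustration and do not come from any real model. It shows two things. First, the scores are turned into probabilities with a softmax. Second, a plausible but wrong continuation (“Sydney”) can carry a large share of the probability, so sampling will sometimes pick it.

```python
import math
import random

# Invented next-token scores (logits) after the prompt
# "The capital of Australia is". Illustrative only, not from a real model.
logits = {"Canberra": 3.2, "Sydney": 2.9, "Melbourne": 1.5}


def softmax(scores, temperature=1.0):
    """Convert raw scores into a probability distribution over candidate tokens."""
    scaled = {tok: s / temperature for tok, s in scores.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - top) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample_next_token(scores, temperature=1.0, rng=random):
    """Pick one token at random, weighted by its probability."""
    probs = softmax(scores, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


for tok, p in softmax(logits).items():
    print(f"{tok:<10} {p:.2f}")

# Repeated draws from the same distribution can return different tokens,
# including the wrong one.
rng = random.Random(0)
samples = [sample_next_token(logits, rng=rng) for _ in range(10)]
print(samples)
```

Lowering `temperature` concentrates probability on the top candidate, which makes output more repeatable. It does not make the top candidate correct; it only makes it more likely to be chosen.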

What is it?

A hallucination is when a model produces content that is inaccurate, fabricated, or misleading, but presents it in a way that sounds convincing. It might invent a source, misstate a fact, blend two ideas together, or fill a gap with something that looks reasonable but is not actually grounded.

That is why I think the word “comfortable” matters here. Not comfortable in the sense of accepting poor quality. Comfortable in the sense of understanding what is happening, where the risk sits, and what controls to put around it.

A common misconception is that hallucinations mean the model is broken. That’s not the most useful way to see it. These systems are built on probabilistic next-token prediction, and they are exceptionally good at producing likely language. “Likely language” and “correct answer” are not the same thing.

That distinction matters a lot in practice. If you ask a model for brainstorming help, naming ideas, first-draft wording, or alternative ways to explain something, a degree of probabilistic creativity is actually useful. But if you ask it for references, policy interpretation, safety advice, procurement clauses, or numbers that will drive a decision, the same behaviour becomes a risk.

That is why I explored the subject this weekend in an experiment on token log probabilities (logprobs). I wanted to see whether programmatic signals, such as token probabilities, weakest-link scoring, runner-up margins, and what I described as a hallucination snowball effect, might provide a more grounded signal than simply asking the model to rate its own confidence.
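Here is a minimal sketch of how signals like these might be computed, assuming you already have, for each generated token, its log probability and the log probabilities of a few alternatives (several APIs can return this when logprobs are requested). The `TokenInfo` structure, the 0.5 threshold, and the particular definition of the snowball rate are illustrative assumptions, not the exact method used in the experiment, and the token data is invented.

```python
import math
from dataclasses import dataclass, field


@dataclass
class TokenInfo:
    """One generated token, its log probability, and a few alternatives.

    Hypothetical structure, loosely modelled on what logprob-enabled APIs return.
    """
    token: str
    logprob: float
    alternatives: dict = field(default_factory=dict)  # alternative token -> logprob


def confidence_signals(tokens, low_threshold=0.5):
    """Compute illustrative warning signals for one generated answer."""
    if not tokens:
        raise ValueError("need at least one token")
    probs = [math.exp(t.logprob) for t in tokens]

    # Weakest link: the single least-confident token in the answer.
    weakest = min(range(len(tokens)), key=lambda i: probs[i])

    # Runner-up margin: how far each chosen token beat its best alternative.
    margins = []
    for t, p in zip(tokens, probs):
        runner_up = max(
            (math.exp(lp) for alt, lp in t.alternatives.items() if alt != t.token),
            default=0.0,
        )
        margins.append(p - runner_up)

    # Snowball (one possible definition): after the first low-confidence token,
    # what share of the following tokens are also low-confidence?
    low = [p < low_threshold for p in probs]
    first_low = low.index(True) if True in low else None
    after = low[first_low + 1:] if first_low is not None else []
    snowball_rate = sum(after) / len(after) if after else 0.0

    return {
        "mean_prob": sum(probs) / len(probs),
        "weakest_token": tokens[weakest].token,
        "weakest_prob": probs[weakest],
        "min_margin": min(margins),
        "snowball_rate": snowball_rate,
    }


# Invented example: confidence drops around the year and the author.
answer = [
    TokenInfo("The", math.log(0.95), {"A": math.log(0.03)}),
    TokenInfo(" study", math.log(0.90), {" paper": math.log(0.06)}),
    TokenInfo(" was", math.log(0.97), {" is": math.log(0.02)}),
    TokenInfo(" published", math.log(0.88), {" released": math.log(0.08)}),
    TokenInfo(" in", math.log(0.96), {" on": math.log(0.02)}),
    TokenInfo(" 2019", math.log(0.31), {" 2018": math.log(0.29)}),
    TokenInfo(" by", math.log(0.45), {" in": math.log(0.30)}),
    TokenInfo(" Smith", math.log(0.22), {" Jones": math.log(0.20)}),
]
signals = confidence_signals(answer)
for name, value in signals.items():
    print(name, round(value, 3) if isinstance(value, float) else value)
```

On this invented example the average token probability is about 0.7, which looks reassuring, but the weakest link (“ Smith”, at 0.22) and the near-zero runner-up margins on the year and the name point at exactly the parts of the sentence a reviewer should check. A low score is a prompt for review, not proof of a hallucination, and a high score is not proof of truth.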

What does it mean from a business perspective?

This is not just a technical quirk. It is an operational reality that affects trust, governance, and adoption.

- You cannot treat Generative AI like a traditional deterministic system. It does not return the same kind of predictable, fixed answer that people are used to from conventional software like Excel or SQL queries. That means organisations and people need different habits, controls, and expectations.

- The real business issue is not whether hallucinations exist. They do. The real issue is whether people know when they matter, and whether the work is designed to catch them before they cause harm.

- Risk varies by use case. A weak first draft for an internal brainstorm is very different from fabricated citations in a board paper, procurement response, policy document, or client-facing recommendation. The same tool can be low risk in one setting and unacceptable in another.

- Trust is shaped by experience. If staff hit a few confident-sounding wrong answers early on, adoption can stall quickly. Time savings alone do not rebuild trust once people feel they have been burned. This is partly why reliable evaluation and responsible reporting still matter so much in the field.

- Leaders need to think in terms of guardrails, not perfection. The right question is usually not “Can we eliminate hallucinations?” but the risk-based one: “What level of checking, grounding, and human review does this use case require?” That framing is much more practical.

- There is also an opportunity hidden in this. Teams that understand how these systems behave tend to use them more effectively. They design workflows that capture the speed benefits without blindly trusting every output, and they put the model’s creativity (the same behaviour that produces hallucinations) to effective use.
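One way to make the risk-based question operational is to write the tiers down. The sketch below is a hypothetical Python mapping: the tier names, the example use cases, and the control labels are illustrative assumptions based on the controls discussed in this article, not a standard.

```python
from enum import Enum


class Risk(Enum):
    LOW = 1     # e.g. internal brainstorming, naming ideas, first-draft wording
    MEDIUM = 2  # e.g. internal material that informs, but does not make, a decision
    HIGH = 3    # e.g. board papers, procurement, policy, client-facing advice


# Hypothetical minimum controls per tier; the labels are illustrative.
REQUIRED_CONTROLS = {
    Risk.LOW: {"spot_check"},
    Risk.MEDIUM: {"spot_check", "grounded_sources", "self_critique"},
    Risk.HIGH: {
        "spot_check",
        "grounded_sources",
        "self_critique",
        "citation_verification",
        "human_sign_off",
    },
}


def missing_controls(risk, controls_in_place):
    """Return the controls a use case still needs before its output is relied on."""
    return REQUIRED_CONTROLS[risk] - set(controls_in_place)


# A procurement response that so far only has spot checks and grounding.
gaps = missing_controls(Risk.HIGH, {"spot_check", "grounded_sources"})
print(sorted(gaps))
```

The value is less in the code than in forcing an explicit decision about which tier each use case belongs to, and what must be in place before its output is trusted.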

What do I do with it?

You do not need a perfect solution to make progress. You do need a few sensible habits and some use-case appropriate controls.

- Ask for sources, references, and uncertainty explicitly. This does not guarantee truth, because models can fabricate citations too, but it is still useful. Treat citations as review material, not as proof in themselves (many models now show real, linked citations anyway).

- Use research-oriented workflows when accuracy matters. Deep research models, retrieval, and tool-grounded workflows can improve reliability because they bring external sources into the process instead of relying only on the model’s internal patterns. That is usually a better fit for factual, high-consequence work than simple prompting alone. In the Microsoft Copilot Agent world this can be enhanced with some Agent controls:

- Ask the model to review and challenge its own output. A useful pattern is draft first, then ask for critique, missing assumptions, weak spots, unsupported claims, and what should be verified by a human. This is not a silver bullet, but it often improves output quality by creating a second pass.

- Keep a human in the loop where consequences are real. For policy, legal, financial, procurement, HR, safety, or public-facing material, human review should be part of the workflow, not an optional extra. That is where accountability still sits. (See link in Further Reading on Risk.)

- Use programmatic checks where you can. For higher-value workflows, add validation steps such as fact checks against trusted sources, schema validation, rule checks, link checking, or probability-based review signals. My own recent logprobs experiment came from exactly this line of thinking: do not just ask the model whether it feels confident; look for measurable warning signals in the output itself. (Some newer public systems, such as GPT-5.x, have stopped providing logprobs, although some ‘open source’ models still seem to provide them.)

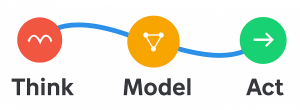

- Train people on how to use the tool, not just where to click. Staff need to understand that a fluent answer is a draft to work with, not an automatic truth source.

Hallucinations are not a sign that Generative AI is useless. They are a sign that we need to use it with the right mental model. These systems generate probable language, not guaranteed truth. Once you understand that, you move away from blind trust or blanket fear, and toward practical controls, better workflows, and more deliberate adoption. That is usually where real value starts.
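The programmatic checks mentioned earlier can start very simply. Here is a minimal Python sketch, using only the standard library, that runs three of them on a model response: schema validation, a rule check that every reported figure appears in a trusted source, and a format-only link check. The JSON field names and the example data are hypothetical, and a real link check would also request each URL to confirm it resolves.

```python
import json
import re
from urllib.parse import urlparse

# Hypothetical schema: the model was asked to reply with JSON containing these fields.
REQUIRED_FIELDS = {"summary": str, "figures": list, "sources": list}


def validate_output(raw_text, trusted_source_text):
    """Return a list of problems found; an empty list means no check fired."""
    # 1. Schema validation: the response must be a JSON object with the expected fields.
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc}"]
    if not isinstance(data, dict):
        return ["top-level JSON value is not an object"]

    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            problems.append(f"field '{name}' missing or not a {expected_type.__name__}")

    # 2. Rule check: every reported figure must appear in the trusted source text.
    source_numbers = set(re.findall(r"\d+(?:\.\d+)?", trusted_source_text))
    figures = data.get("figures") if isinstance(data.get("figures"), list) else []
    for figure in figures:
        if str(figure) not in source_numbers:
            problems.append(f"figure {figure} not found in trusted source")

    # 3. Link check (format only): each source should be an http(s) URL with a host.
    sources = data.get("sources") if isinstance(data.get("sources"), list) else []
    for url in sources:
        parsed = urlparse(str(url))
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append(f"source '{url}' is not a well-formed URL")

    return problems


# Invented example data.
trusted = "Revenue grew 12.5% to 480 million in 2023."
response = json.dumps({
    "summary": "Revenue grew strongly.",
    "figures": [12.5, 480, 510],
    "sources": ["https://example.com/annual-report", "internal memo"],
})
for problem in validate_output(response, trusted):
    print(problem)
```

None of these checks proves an answer is right, but each one catches a specific, common failure cheaply before a human reviewer spends time on it.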