A lot of GenAI discussion is still in the “help me do this task faster” phase. That made sense for the first wave. But we are now moving to something bigger and more disruptive. First, GenAI helped people with tasks. Now it is starting to take on processes. Next, it will start to reshape, compress, and in some cases replace parts of roles, or entire roles. I do not think most organisations are preparing for that shift with anything like the seriousness it deserves.

What is it?

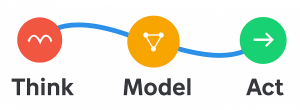

The clearest way I have found to think about this is through three levels of impact: tasks, processes, and roles.

1. Assistants and copilots help with tasks

This is the world most people are familiar with. You ask for help drafting an email, summarising a document, rewriting a paragraph, producing a first pass at analysis, or cleaning up a presentation. The human is still doing the job. The AI is helping with individual tasks inside that job.

That is where most mainstream GenAI adoption started, and in many organisations it is still where most adoption sits today. It is extremely useful, and it is about to change.

2. Cowork-style systems can take on processes

This is where things start to change more materially. A process is not just one task. It is a connected sequence of tasks that together produce a business outcome.

That is why tools like Claude Cowork and Copilot Cowork matter. They are pitched as handling tasks autonomously across your computer, local files, and applications to return a finished deliverable. Microsoft Copilot Cowork can schedule meetings, create documents, post in Teams, and manage calendar activity, with approval before actions happen.

That is a very different category from “help me write this paragraph.” It is much closer to: take this objective, figure out the steps, work through the sequence, assemble the output, and bring me something close to finished - a complete process.

That shift was brought home to me very directly this week. Copilot Cowork, through Microsoft’s Frontier program, landed in my tenant. I used it to create a project status report from my emails and, within minutes, it had thought through the task, planned the work, and produced something that was more than 95% complete, including the PowerPoint deck.

That is not just task support - that is process execution.

3. Role-level agents point toward role replacement or compression

The next step is more significant again. Once a system can reliably handle enough processes within a function, it starts to affect the role itself - not just one task, not just one workflow. The role.

This is where tools like OpenClaw become interesting as a signal. OpenClaw is described as a locally running AI assistant that operates directly on your machine. In other words, it starts to look less like a single helper and more like a configurable digital worker environment. I treat this as an early indicator rather than a mature enterprise standard. But the direction is important.

When you can configure agents with distinct responsibilities, tools, context, state, and access, you are no longer just helping a person with work. You are starting to create something that can stand in for significant portions of a role. That does not necessarily mean whole jobs will disappear overnight.

It does mean:

- Role boundaries change

- Skill expectations change

- Management span can change

- Staffing assumptions can change

- The amount of work one person can supervise can change

I would be very surprised if we do not see a Microsoft-style enterprise-protected version of this kind of role-level agent pattern emerge more explicitly this year. (My speculation, not a Microsoft announcement.)

What does it mean from a business perspective?

This is not just a tooling shift. It is a work design shift.

- You need to distinguish the level of impact. If you treat task tools, process tools, and role-level agent systems as the same thing, you will underestimate both the opportunity and the risk.

- Task-level adoption tends to create local productivity gains. People get faster. Quality may improve. Friction may reduce. But the operating model often stays largely intact.

- Process-level adoption starts to change throughput and workflow design. This is where cycle times, handoffs, review stages, service levels, and delivery expectations start to shift.

- Role-level adoption affects organisational design. Once AI can take on enough recurring process, the question becomes: what is the human role now? Supervision? Judgment? Exception handling? Stakeholder engagement? Final approval? All of the above?

- Governance gets more serious as you move up the stack. A poor draft email is annoying. A flawed process execution can cause operational or reputational harm. A badly governed role-level agent can create permission, audit, accountability, and control problems very quickly.

- Change management becomes more human, not less. People can absorb task tools fairly easily. Process and role shifts create uncertainty, identity questions, political resistance, and management challenges.

- Leaders need to decide deliberately where to stop. Just because a process or role can be compressed does not mean it should be. Some work benefits from, and requires, human friction and human accountability.

- The strategic risk is accidental adoption. If leaders do not make explicit decisions, staff will still experiment, workflows will still drift, and AI will still start reshaping work, just without clear boundaries or oversight.

What do I do with it?

You do not need to solve the whole future this quarter. But you do need a broader view than “AI helps people work faster.”

- Map your current AI use into the three levels. What are you using for tasks? What are you piloting for processes? Where do you already see early signs of role compression or redesign?

- Stop evaluating all AI tools with the same criteria. A task assistant should be judged differently from a process executor. A process executor should be judged differently from a role-level agent system.

- Pilot at process level on purpose. Pick a process that is repetitive, useful, and reasonably bounded. Status reporting, research collation, internal communications, structured document creation, and meeting follow-up are good examples.

- Document the human checkpoints. Decide where approval sits, where review is mandatory, and what exceptions should always escalate to a person. What is your approach to ensuring that human-in-the-loop (HITL) friction remains in the right processes?

- Start reviewing roles now. Ask which parts of roles are true judgment work, which parts are relationship work, and which parts are mostly process overhead. That distinction will matter much more as process-level and role-level tools mature.

- Prepare managers for redesign, not just staff for tools. The real challenge is often not whether someone can use the feature. It is whether managers understand how work will change, including what departments will look like.

- Strengthen governance before broad rollout. Permissions, audit, privacy, data handling, escalation paths, and acceptable-use guidance all need more attention once the system can act across tools and content.

- Use the “tasks, processes, roles” model in leadership conversations. It is simple, but it helps people quickly see that not all GenAI impact is the same.
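If it helps to make the first and fourth steps concrete, the mapping and checkpoint exercise can start as a very simple inventory. The Python sketch below is only an illustration under my own assumptions, not a prescribed tool: the names `Level`, `AIUse`, `group_by_level`, and `missing_checkpoints`, and the sample entries, are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Level(Enum):
    """The three levels of impact used in this article."""
    TASK = "task"
    PROCESS = "process"
    ROLE = "role"


@dataclass
class AIUse:
    """One AI use in the organisation (hypothetical record shape)."""
    name: str
    level: Level
    # Documented points where a person must approve or review.
    checkpoints: List[str] = field(default_factory=list)


def group_by_level(uses: List[AIUse]) -> Dict[Level, List[str]]:
    """Map the inventory into the three levels."""
    grouped: Dict[Level, List[str]] = {level: [] for level in Level}
    for use in uses:
        grouped[use.level].append(use.name)
    return grouped


def missing_checkpoints(uses: List[AIUse]) -> List[str]:
    """Process- or role-level uses that have no documented human checkpoint."""
    return [
        use.name
        for use in uses
        if use.level is not Level.TASK and not use.checkpoints
    ]


# Sample entries are made up for illustration only.
inventory = [
    AIUse("Email drafting", Level.TASK),
    AIUse("Weekly status report", Level.PROCESS,
          ["Manager approves before sending"]),
    AIUse("Meeting follow-up", Level.PROCESS),
]

by_level = group_by_level(inventory)
gaps = missing_checkpoints(inventory)

for level, names in by_level.items():
    print(f"{level.value}: {names}")
print(f"No documented checkpoint: {gaps}")
```

A spreadsheet would do the same job. The point is that anything above task level with no named human checkpoint shows up as an explicit gap rather than being discovered later.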

The mistake to avoid is talking about all GenAI as though it is still just a better assistant. It is not.

Assistants and copilots help with tasks. Cowork-style tools can take on processes. Role-level agent systems are beginning to point toward a world where parts of roles are redefined or replaced. That is the shift. And the organisations that do best over the next year or two will be the ones that recognise that these are three different levels of change and prepare accordingly.